This is an assumption that is almost always made in science fiction, and often made in non-fiction writing about AI.

- Human Essence Assumption: There is some essential aspect of a human being that gives rise to all the other important aspects of a human being.

I am interested in the straightforward way we understand and accept this assumption, before we have had a chance to examine it or question it, as we do when we are casually watching science fiction or reading the paper.

I’ve written eight-ish articles about the assumption. Read The Sixth Day first; I think it is the easiest way to convince you that (1) you understand what I’m talking about, and (2) you have occasionally accepted the assumption without question, at least when you were watching a movie.

This article is mostly a way to try to keep my thinking straight. But it might also be interesting to you all to understand the scope of the argument and make clear what I’ve actually proved.

Seven Aspects

Notice the category-neutral term “aspect” in the assumption — I am not talking about abilities, or properties, or sentience, or a subjective self, I am talking about any and all of these. At once. It says: “All the important aspects.” The HEA asserts that all these (ontologically different) aspects have the same source, some singular essence.

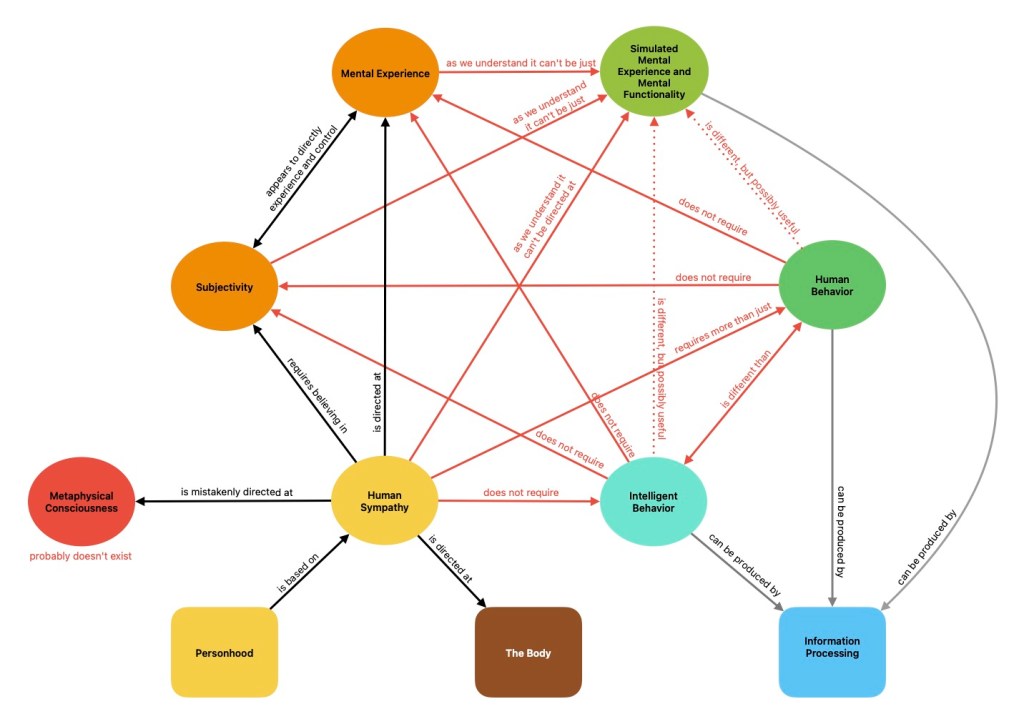

I think I can capture most or all of these “aspects” and potential “essences” in these seven categories.

- Human behavior. In The Tell, I discussed behaviors that demonstrate our “humanity”.

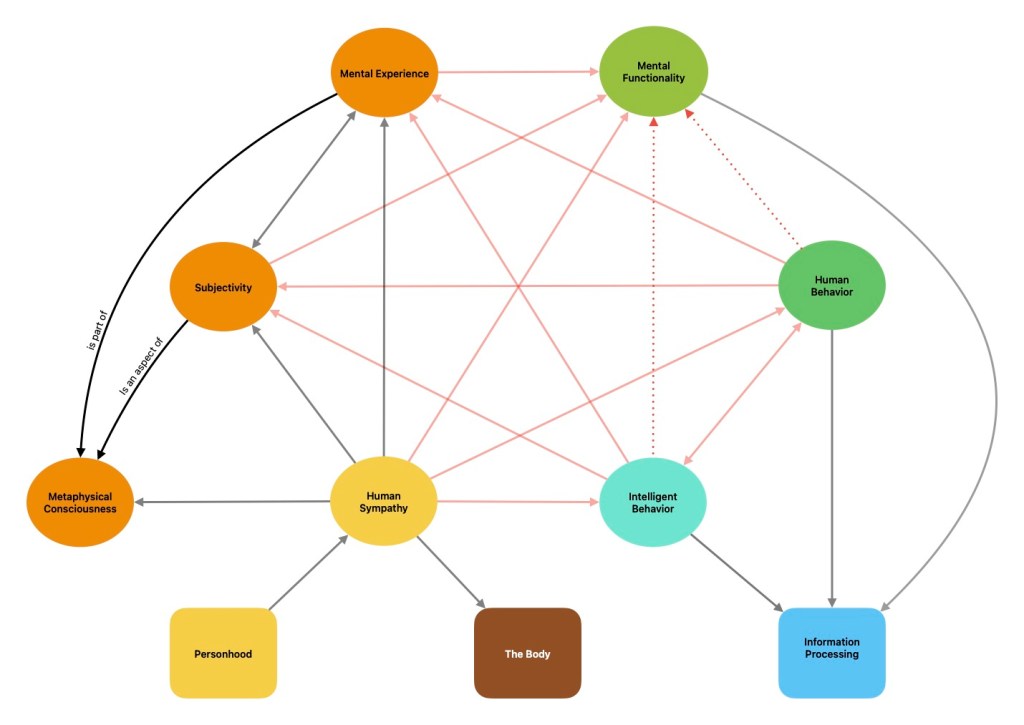

- Consciousness as a thing or “self”. Metaphysical objects and substance dualism are covered in Ghosts and Other Invisible Stuff.

- Subjectivity. What it feels like to perceive, think, feel and act from a first person point of view. The Paradox of Mary and Mark tried to convince you that you already know what “subjective consciousness” is. Also known as sentience, subjective consciousness, or subjective self-awareness.

- Mental Experience. This is all the things that you experience as happening inside your mind: your train of thought, inner speech, attention, imagination, memory, thoughts, plans, etc. All the contents of your consciousness, including everything that makes you you. This is something that we are subjectively aware of, and I discuss subjectivity in The Paradox of Mary and Mark.

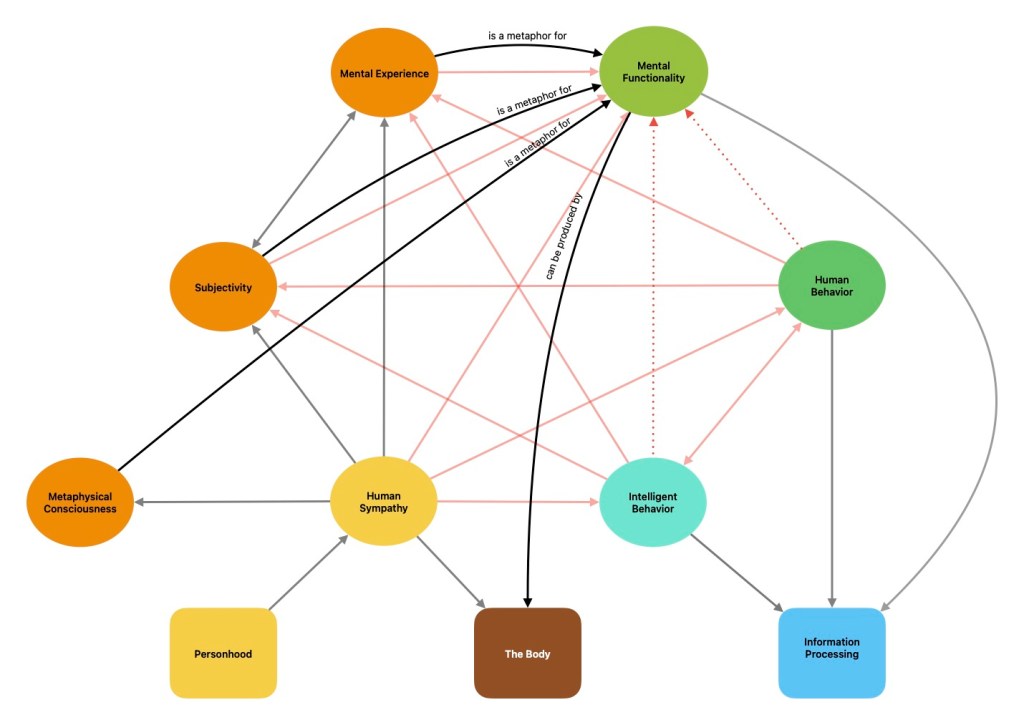

- Mental Functionality and Simulated Mental Experience. Classical AI simulated the way the mind uses mental objects like plans and goals (e.g. Newell & Simon’s means-ends analysis). 20th-century neuroscience has tried to model what the brain is doing when we are “conscious of” something (e.g. Global Workspace Theory). These models are a form of information processing. I examine the relationship between these models and subjectivity in Characters, human sympathy in The Stepford Scenario and intelligence in The “I” in AI.

- Human Sympathy. We feel differently about “persons” than “machines”. The Stepford Scenario tried to determine what, exactly, it is that we care about. This is the central issue in most science fiction films about AI — in Considering Samantha I consider the role sympathy plays in the movie “Her”.

- Intelligent behavior. Today, in 2026, it’s common to refer to the kind of intelligent behavior we’re talking about as “artificial general intelligence”. This is a behavior or ability: it’s something the thing can do. I talked about how the field of AI research defines intelligence in The “I” in AI.

A few observations.

No fewer than four of the aspects above are sometimes called “consciousness”. This makes “consciousness” a very misleading term when used casually.

The aspects above are all of very different kinds. We have an invisible thing, some behaviors, various types of information processing, an effect on people, and experiences. Their differences are intrinsic and significant. (Philosophers would say that they have a different “ontological status” or “mode of being”.)

Human behavior, intelligent behavior and simulated mental experience can all be produced by information processing. It’s the “same” information regardless of how it is represented, it’s the “same” behavior regardless of who or what is doing it. With these there is no difference between a simulation of a thing and the real thing.

Mental experience, subjectivity and metaphysical consciousness are problematic, and their very existence leads to paradoxes.

Human sympathy is central to this whole subject. It is the ground of morality, law, relationships and society. We experience it very directly and believe in it very deeply. No matter what else we discover, we have to acknowledge how human sympathy works and that it matters.

The Relationships

One key entailment of the HEA is that there is a correlation between each pair of these things; if one of these aspects is the “essential” aspect, then we expect it to bring some or all of the others along with it. So the HEA implies a whole set of assertions like this:

- Emergence (Sufficiency I->C): Consciousness will emerge if superintelligence is present.

- Necessity C->I: In order to engineer superintelligence, we would have to engineer consciousness.

- The Tell (Sufficiency HB->HS): If a robot behaves like a person, then it should be considered a person.

- Amodei’s Error (Sufficiency MF->SC): If a robot has integrated information (IIT), then it has subjective consciousness.

The majority of my work in the previous six articles has been to disprove statements of this form, to show that various aspects aren’t necessary or sufficient for each other: that they are different things and are, in general, independent of each other.

The HEA doesn’t require all of the entailments of this form, of course. It’s possible that any one of the seven things I picked isn’t connected to the human essence (e.g. if all else fails, we can always invoke the “AI Effect”). To disprove the HEA I have to show that a significant number of these things are unrelated, so many that there are only a few meaningless examples left.

I think I’ve done that. There are 7 “aspects”, which gives us 21 relationships. I’ve covered all of these relationships in the previous articles, except for a couple that are completely obvious. (E.g. human sympathy does not require intelligence; i.e. we love our children even if they are stupid. Enough said.)
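The count of 21 is simply the number of unordered pairs that can be drawn from seven items. A minimal sketch in Python, assuming each relationship is an unordered pair of distinct aspects (the short labels below are my own shorthand for the seven categories, not terms fixed by the articles):

```python
from itertools import combinations

# Illustrative labels for the seven aspects listed earlier.
ASPECTS = [
    "human behavior",
    "metaphysical consciousness",
    "subjectivity",
    "mental experience",
    "mental functionality",
    "human sympathy",
    "intelligent behavior",
]

# Each unordered pair of distinct aspects is one relationship to examine.
pairs = list(combinations(ASPECTS, 2))

print(len(pairs))  # 21
for a, b in pairs:
    print(f"{a} <-> {b}")
```

If necessity and sufficiency are counted separately for each direction, as in the I->C and C->I examples, the same pairs yield 42 directed claims.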

Most of the differences immediately fall out and are easy to see. (And I hope you’ve been patient when I’ve belabored the obvious.) Normally the essence is unexamined and nebulous — even when it feels familiar and obvious — but as soon as you reach into the concept and begin to deconstruct it, it doesn’t take long to see that it was nonsense.

Hopefully, all these refutations will convince you that there is absolutely no evidence to support the human essence assumption.

Related Assumptions

- Chain of Being:

I have also been able to show that none of these is adequate as a “linear measure of all things”. This absolutely refutes the chain of being assumption.

- Paragon of Animals:

- Mysterianism: Science will never completely explain X, where X is some essential aspect of human being.

This is absolutely refuted (by [[CT+]]) for three of the aspects: intelligent behavior, human behavior and mental function. These are all things that can be simulated to a reasonable degree by a digital computer and there is no “other thing” — no other essential thing — that a machine needs to simulate these things.

There are limits to what AI and superintelligence can do, but they don’t come from the HEA; they come from physics and computer science (undecidability, NP-completeness, non-linear dynamics / “chaos” / “butterfly effect”, quantum indeterminacy, limits of data collection, speed of light, laws of thermodynamics, etc., etc.).

There are some paradoxes with the other four aspects: subjectivity, mental experience, metaphysical consciousness and human sympathy. It’s beyond the scope of this project to try to solve these mysteries (although I hope I have framed the problems better, and eliminated the most naive answers).

Perhaps I should say (just so I have said it at least once) that I believe that science can explain these, but I think it’s likely that we won’t like the answer, and that most people will go on believing in a version that is paradoxical.

- Forging the Gods

Two Other Theories of How This Works

Functionalism, computationalism and eliminative materialism all hold something like this:

Substance dualism works like this:

Some observations:

- EM implies that the “soul” and “subjectivity” are both, at best, just useful ways of looking at what’s happening in brains. Substance dualism says that both are aspects of metaphysical things. What’s interesting to me is this: in both cases, metaphysical consciousness and subjectivity have the same status. “Souls” are no different from “subjective consciousness”; they play the same role, they are the same kind of thing.

- Neither does anything to rescue the human essence assumption. All the red links are still there. There are a few more links between various aspects of consciousness, but this does nothing to pull the whole thing together; intelligence, for example, is still not connected to anything else.
