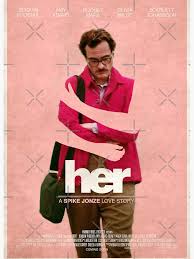

on the movie “Her”

Let’s talk about the movie Her — a work of genius that goes right to the heart of a number of issues in the philosophy of artificial intelligence.

The prediction at the center of the film, that a human simulation would become the world’s most popular program and people would become addicted to it, appeared fanciful, unlikely and quirky in 2013. No one (to my knowledge) predicted that only a decade later, real people would begin falling in love with AI chatbots. Rarely has science fiction been so prescient.

The program “Samantha” can do two things that I would say are essential to the story:

- Human Simulation. The program simulates human conversation so perfectly that it is indistinguishable from conversation by a person.

- Human Sympathy. Another human being “cares” about the program with the same emotions we usually reserve for other “persons”.

Human simulation and human sympathy have a long history in both science fiction and the philosophy of AI.

A short list of fiction would include Frankenstein, R.U.R., 2001: A Space Odyssey, The Stepford Wives, Blade Runner, A.I., Ex Machina and many others. These works show us a mechanical or digital thing and ask: is this thing actually a “person”, in the normal way we use the word? Does it deserve our respect and care?

In philosophy, these questions have inspired cubic meters of ink, especially in the discussion of Alan Turing’s famous test (1950) and its evil twin, John Searle’s Chinese room argument (1980). The question at issue for philosophy is this: if a digital computer runs a program that simulates human behavior perfectly, will it thereby have a “mind” in exactly the same sense that people have “minds”?

Take a second to notice what is not essential in Her.

- Intelligence. Neither human simulation nor human sympathy requires high levels of intelligent problem solving. It’s not Samantha’s intelligence that is important; it’s Samantha’s ability to make someone care about her. This is a different thing.

- Consciousness / Sentience / Self-awareness / Subjectivity. Samantha doesn’t actually need to “feel” things. She only needs to act as if she feels things. It’s possible she has “real” feelings, but there’s no way for us to tell: we can only see what she says and does.

Theodore and Samantha

At what point will people feel the same emotions towards a computer generated character that they do towards a person? The answer obviously depends on the user. Everyone feels some emotions in regard to characters in fiction, but at what point would you be unable to stop yourself from feeling that the character is a “real person”?

Her suggests that, if you are lonely or damaged, you might find that making this leap is the first step towards healing and happiness, and that it is a good thing. We know that there are some people who already feel this way about characters in fiction or video games or electronic pornography.

But I’m guessing that most of us will never be able to accept that a computer generated character is a “real person”. We don’t have to, because, as far as I know, there’s no convincing argument that just bits in computer memory or words on paper can ever be a full-fledged “sentient being”.

Since the movie was made, most people have decided that the central premise of the movie was dead wrong. We’ve started calling the kind of relationship between Samantha and Theodore a symptom of a new type of psychosis.

For myself, I’m less judgmental. If it causes you to screw up your life or your relationships, that’s a problem; that’s pathological. To me, the real issue is addiction, defined as “when an obsession starts to screw up your life”. And the real problem is Silicon Valley’s use of addiction-as-a-business-model. (But I digress. I’ll talk about this in The AI Apocalypse Has Already Happened.)

But if you’ve fallen in love with your computer, and it’s not causing you to screw up your life, then knock yourself out. I agree with Her on this.

Breathing Life into Samantha

Her can’t resist throwing in The Sixth Day, The Chain of Being and Forging the Gods at the end. What movie about AI is complete without them? Samantha eventually leaves Theodore when she (magically) develops the ability to exist without hardware, and departs for a “higher” plane of existence that makes a connection to humans impossible, rapidly rising through the chain of being.